#30. Princesses, Frogs, AI Engineering, and the Moon

Can't have a newsletter from Kay without some mention of the moon

Hello,

Here's a fairytale I heard today:

A princess is down by the pond playing with her favourite ball, when it rolls away and falls down into the water. She starts crying, and a frog appears. The frog asks her what's the matter. She says she's ever so sad about her ball being lost forever. And the frog says, well don't worry, I will go and fetch it for you. But if I do, you have to let me eat off your plate and sleep in your bed.

She brightens up at this, and happily asks him to do it. The frog goes down into the water and fetches the ball up for her. She takes it and runs back to the castle.

Later she is having dinner with the king and they hear a knock at the door. The king goes to answer it and finds the frog there, who tells him about the princess' agreement. The king scolds the princess and tells her that she must keep her word. So the frog comes in and sits on the table and eats off of her plate.

The princess can barely stand to finish her meal, and instead retires to her room. When it is time for bed, the frog shows up again and reminds her of their agreement. She reluctantly puts him in the corner of the room. The frog protests that no, this isn't what they agreed, he wants to sleep on her pillow.

With this, the princess picks him up by his leg and throws him straight at the wall — and at that moment, he transforms into a prince.

Small things I've done

Watched the new Gladiators on iPlayer, it's really top notch. This was also another victory for Gladiator E in our battle to recommend each other things that we actually enjoy.

Had a really nice meeting with Jamie of Trans Positive Media Collective. I don't really go so far as to say cis people have a 'duty to stand up for trans people' or anything like that, but when it happens it does really make things feel a lot lighter. If you know anyone who works in media who cares about this stuff and/or who might benefit from networking with others who do, please send them the link.

Started playing Tears of the Kingdom... exciting! The voice acting never gets old.

Continued playing Cyberpunk 2077 — and confirmed my theory that if you play the game as a trans woman character then of the romances available: the gay man isn't into you, the straight man isn't into you, the gay woman isn't into you... but the straight woman is. Which is a very well observed and funny joke, intentional or not.

I also started playing Phantom Liberty and I'm having a good time so far. Accidentally asked out the president.

The rest of this newsletter is quite dev-heavy so feel free to skip down to the links if that doesn't interest you!

AI Dev

As some of you may know, I'm building a job board focused on routes into tech, entry level roles, etc. A few of the features I want to build in benefit from the use of AI language models, so I've been learning a lot about how to work with them. A few small insights:

Local models are psychologically useful

Carmack has this idea that you should invest in the necessary computing power to make experimentation feel 'free'. So when he started working on AI, he bought a powerful computer (high upfront cost) rather than using the APIs (pay per request). The logic is that if running your experiments costs even like £0.01 you might psychologically start to 'save money' by not experimenting so much. You don't really want this.

I've been using Ollama and Mistral 7B, which can run locally on my M2 Mac, to experiment with. It's a fairly low-power model, but it's enough for what I want provided that I combine it with some extra tricks. I think this is good? It means that my systems will require minimal intelligence and should only get better if I were to swap in something more powerful? I hope so anyway.
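For anyone wanting to try the same setup, here is a minimal sketch of calling a local model over Ollama's HTTP API using only the Python standard library. It assumes Ollama is running on its default port and that the `mistral` model has already been pulled; the function names are made up for illustration and aren't from my own code.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port and the
# `mistral` model has been pulled (`ollama pull mistral`).
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "mistral") -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt: str, model: str = "mistral", timeout: float = 120.0) -> str:
    """Send the prompt to the local model and return its text reply."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    # With "stream": False the server returns one JSON object whose
    # "response" field holds the generated text.
    return body["response"]


# Example (needs a running Ollama server, so it is left commented out):
# print(ask_local_model("What is the main language in: 'Junior Java Developer'?"))
```

Because nothing here is billed per request, it costs nothing to call `ask_local_model` in a loop while fiddling with prompts, which is the whole point.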

I also got the more powerful model Mixtral working via the 4090 on my gaming PC, and I can reach it via SSH from my MacBook. It works pretty fast. I might try making that a bit more convenient so I'm psychologically more likely to use it.

It's useful to combine AI models with traditional computing

I think? So for example, if I'm processing job adverts, sometimes the metadata in the HTML can tell me what company is advertising the job and I don't need to use an AI to extract that information. This makes it notably more robust.
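As a rough sketch of the "no AI needed" path: one common form of this metadata is a schema.org `JobPosting` block embedded as JSON-LD, with the employer under `hiringOrganization`. The code below assumes that form (other sites use different metadata), and the function name is hypothetical.

```python
import json
from html.parser import HTMLParser
from typing import Optional


class _JsonLdCollector(HTMLParser):
    """Collects the text of every <script type="application/ld+json"> block."""

    def __init__(self) -> None:
        super().__init__()
        self._in_jsonld = False
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        # Script content can arrive in several chunks, so append to the last block.
        if self._in_jsonld:
            self.blocks[-1] += data


def employer_from_metadata(html: str) -> Optional[str]:
    """Return the hiring organisation's name if a JobPosting block names one."""
    collector = _JsonLdCollector()
    collector.feed(html)
    for raw in collector.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            continue  # malformed metadata: skip it and try the next block
        if not isinstance(data, dict) or data.get("@type") != "JobPosting":
            continue
        org = data.get("hiringOrganization")
        if isinstance(org, dict) and isinstance(org.get("name"), str):
            return org["name"]
        if isinstance(org, str):
            return org
    return None  # nothing usable: fall back to asking the model
```

When this returns `None`, that's the cue to hand the advert to the language model instead.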

Buuuuut some job adverts are lying to you — they're actually recruitment companies posting the job ads and so the company name will be the recruiter. This isn't that useful for my purposes, so ideally I want the AI model to work out whether it's being slightly misled, flag that, and then mark the employer as unknown and the job as posted by an agency. All of this is also adversarial to the recruiter posting the job ad, who probably doesn't want to make this really obvious.

What I ended up with is something like this:

Does the AI model think this was posted by an agency? I give it a few examples of what to look for.

Does the job advert contain a few key snippets I'm looking for, like "our client is"?

Does the employer name contain the word "recruit"?

If any of those are true, I blank out the employer name and mark it as an agency advert.
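Those three checks can be sketched roughly like this. The model call is passed in as a plain function so the rule itself stays testable without a model; `classify_advert`, `AGENCY_SNIPPETS` and the callback name are all hypothetical, and the snippet list contains only the one phrase mentioned above.

```python
from typing import Callable, Optional, Tuple

# Only "our client is" comes from the text; a real list would be longer.
AGENCY_SNIPPETS = ("our client is",)


def classify_advert(
    advert_text: str,
    employer_name: Optional[str],
    model_thinks_agency: Callable[[str], bool],
) -> Tuple[Optional[str], bool]:
    """Return (employer_name_or_None, is_agency) for one job advert."""
    text = advert_text.lower()
    name = (employer_name or "").lower()
    # Cheap string checks run first; `or` short-circuits, so the model is
    # only consulted when neither of them has already fired.
    is_agency = (
        any(snippet in text for snippet in AGENCY_SNIPPETS)
        or "recruit" in name
        or model_thinks_agency(advert_text)
    )
    # Any positive signal blanks the employer and marks the ad as agency-posted.
    return (None if is_agency else employer_name, is_agency)
```

Putting the string checks before the model is a design choice in this sketch, not something the rule requires: the outcome is the same in any order, it just saves model calls.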

I also try to extract the tech stack, which I do by mixing two approaches. First I look through the job advert to see if any of the ~1k most popular technologies from StackOverflow Dev Survey are mentioned (unless they're really unsearchable like 'C'). Then I give the AI model that list, along with the job ad, and ask it to spot any I've missed. I then go through the AI's list, see if the ones it has found really do exist in the job advert, and then take the
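Here is a rough sketch of the two passes described above: a plain keyword match, then model suggestions that only count if they can be found in the advert text. The names are hypothetical, the model is a stand-in function, and the sketch returns the two sets separately rather than deciding how to combine them.

```python
import re
from typing import Callable, Iterable, List, Set, Tuple


def mentions(term: str, text: str) -> bool:
    """True if `term` appears in `text` as a whole token, ignoring case."""
    # Lookarounds instead of \b so that terms like "C++" or ".NET" still work,
    # and so "Java" does not match inside "Javascript".
    pattern = r"(?<![A-Za-z0-9])" + re.escape(term) + r"(?![A-Za-z0-9])"
    return re.search(pattern, text, flags=re.IGNORECASE) is not None


def extract_tech(
    advert: str,
    known_tech: Iterable[str],
    ask_model: Callable[[str, List[str]], List[str]],
) -> Tuple[Set[str], Set[str]]:
    """Return (keyword_matches, verified_model_suggestions)."""
    known = list(known_tech)
    # Pass 1: which of the known technologies are literally mentioned?
    found = {t for t in known if mentions(t, advert)}
    # Pass 2: the model sees the advert and the list, and suggests extras.
    suggested = ask_model(advert, sorted(known))
    # Keep a suggestion only if it really appears in the advert, which
    # filters out anything the model has made up.
    verified = {t for t in suggested if t not in found and mentions(t, advert)}
    return found, verified
```

The verification step is what makes a small model tolerable here: it can suggest whatever it likes, but a suggestion that isn't in the text never gets through.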

Evaluations (evals) are useful, but the current tooling is limited

AI models are non-deterministic, or at the very least unpredictable, and this means you can't really test them like you can traditional systems. If they do exactly what you want 95% of the time, that might be a really great result. However, most test tooling assumes you want 100% passing.

The typical approach is called 'evals', which roughly means: you find a set of examples of data your system might need to work with, figure out what a good output might look like for each one, and then use an evals system to run each example through your real model and grade the results as a percentage.
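Stripped right down, the idea can be sketched like this: score the system across the examples and compare the pass rate to a target, rather than demanding every case passes. This is a generic illustration with hypothetical names, not how any particular evals tool works, and the 95% default just echoes the figure above.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Example:
    input_text: str  # the data the system will be given
    expected: str    # what a good output looks like, decided by a human


def pass_rate(examples: List[Example], system: Callable[[str], str]) -> float:
    """Run every example through the system and return the % that match."""
    if not examples:
        raise ValueError("need at least one example")
    passed = sum(1 for ex in examples if system(ex.input_text) == ex.expected)
    return 100.0 * passed / len(examples)


def meets_target(
    examples: List[Example],
    system: Callable[[str], str],
    target_percent: float = 95.0,
) -> bool:
    """An eval 'passes' when the rate reaches the target, not only at 100%."""
    return pass_rate(examples, system) >= target_percent
```

Exact string equality is the crudest possible grader; real evals usually need something fuzzier, but the shape (examples in, percentage out, threshold instead of all-or-nothing) stays the same.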

I tried out promptfoo for this, but it didn't really satisfy me (YAML is annoying to work with, the assertions didn't feel powerful enough, and it only focused on the model itself rather than the wider system). My purposes didn't feel like what promptfoo was aiming at. I really wanted a system that was like a testing framework in that I could execute a part of the system and then evaluate the result, but also like an evals framework in that it understood the idea that we don't expect perfect results.

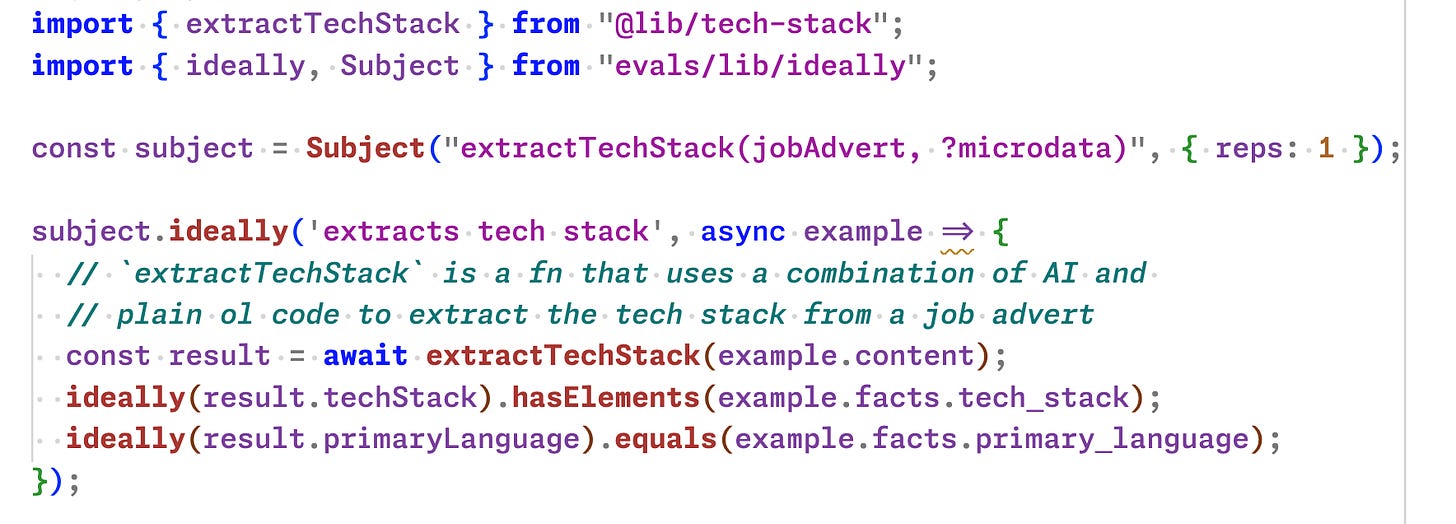

So I wrote a little test/evals framework that I've called ideally. The idea is that you define what your system should ‘ideally’ achieve, and the framework will measure that for you across a list of examples you provide. That word ‘ideally’ also allows us to use an ‘expect’-style syntax.

The test code looks like this:

And the system then passes in each in a set of examples in a separate folder. Here's one:

---

// This data is manually extracted by you as the 'truth'

primary_language: Java

tech_stack:

- Java

- React

- Python

- Django

- Javascript

---

// This is the example job ad

Junior Java Developer

Job Type Permanent

Description

Working part remote (3 days from home), we're on the lookout for a Junior Developer with commercial Java experience (6 months will suffice).

You'll be running projects from day one - as it's a relatively small software house with 18 staff, you'll need to be able to work in a collaborative environment and show a willingness to learn Javascript libraries such as React. Experience with Python/Django, whilst not essential, would be an added bonus.

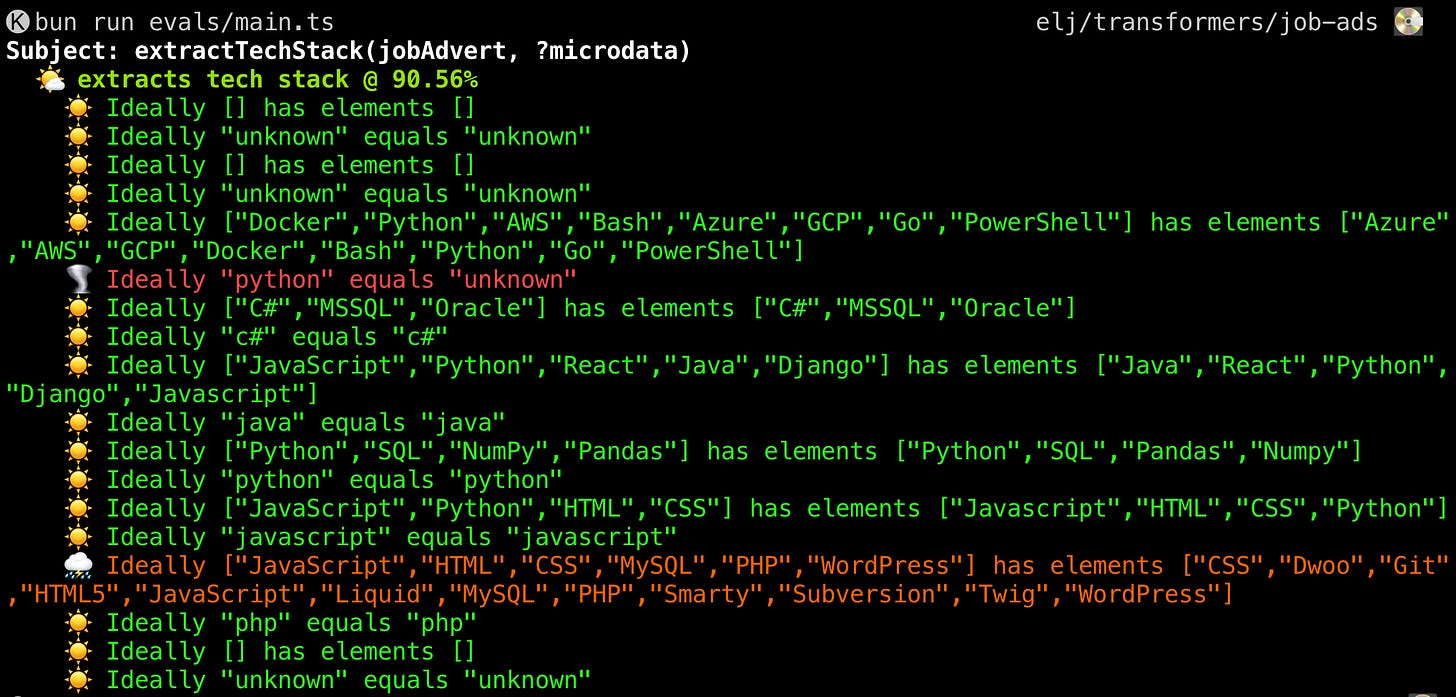

// etcAnd the output looks like this (across ~9 examples like the above)

As you can see, I've used weather emojis and colour to reflect how 'good' or 'bad' the result is. The tornado there means the model has misidentified Python as the main programming language when it isn't, and further down you can see there's a dark orange and a stormy cloud to reflect that the model has missed a lot of technologies in a different job ad.

There are still a lot of rough edges to be smoothed out, and I don't know if I'll end up releasing the evals framework itself, but it has been interesting thinking about the problems.

Links

I saw a speedrunner with a heart rate monitor hit the 180s the other day, so got curious as to the max speed of a human heart. The theory goes that the max is 300bpm, but there was a case example of one person's heart going at 600bpm!

A nice concise blog post on How to explain anything to anyone, probably aimed at a technical audience. Nothing surprising if we've talked about this before but nicely crystallised here.

Nigerian engineering students’ favorite teachers are Indian YouTubers. The phenomenon of Indian teachers on YouTube is an interesting one, in part because if you follow a certain sort of teaching orthodoxy, the style of teaching they typically use — mostly straight chalk and talk — shouldn't work. To be fair there isn't much rigour behind 'works' here (yet) but, personally, I wouldn't be surprised if it was effective (I personally think lectures aren't intrinsically bad, just hard to get right). Many students seem to think so. They're now on my watchlist!

How Slow Scan TV Shaped the Moon. Really nice article, including a demo, of a kind of information transmission I'd not come across before! You plug a camera into an audio speaker, play the sound down the radio, from the moon say, and then someone at the other end with a radio receiver plugs it into a TV and there you go. It's a bit more complicated than that but that's the idea. And the dreamy quality of the resultant footage now in some way informs our imagination of what the moon is really like.

"Star Trek mission carrying actors' ashes to Moon 'crashes in flames above the Pacific'" OK so firstly, we're shipping dead people to the moon now apparently? Is this good? Navajo people don't seem to think so. I looked it up and apparently one guy is already there from a previous mission. This time the spacecraft didn't work so they all just fell back to earth on fire, incl Arthur C Clarke, Scotty, and also 67-odd other people (presumably only in part?). What. Stop firing dead bodies at the moon!

Next time, I won’t forget to write about a new concept I’m working on: teaching effects.

Have a great week!

K